For years, the big promise of AI in biology was interpretation. Models could read papers, analyze genomic data, classify images, and suggest hypotheses faster than any human team. Over the last two weeks, the story has started to feel more concrete. The frontier is no longer just AI that understands biology. It is AI that can participate in the experimental loop itself, proposing tests, learning from the results, and steering the next round of lab work. That shift became especially visible this month through new reporting on autonomous biology experiments and through continued discussion around models that can now generate short genomic sequences.

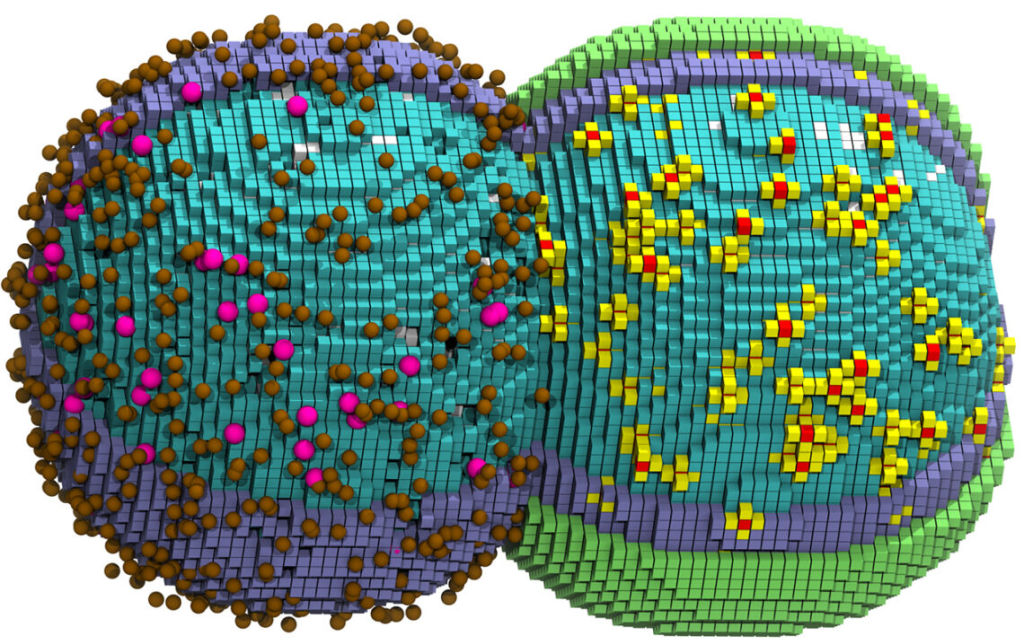

The clearest example came from OpenAI and Ginkgo Bioworks. In work highlighted by both OpenAI and Scientific American, GPT-5 was connected to Ginkgo’s cloud laboratory to optimize cell-free protein synthesis, a widely used method for making proteins without living cells. According to OpenAI, the system ran more than 36,000 unique reactions across 580 automated plates and achieved a 40 percent reduction in protein production cost, with a 57 percent improvement in reagent cost. Scientific American described the broader significance well: this was not just a chatbot commenting on biology, but an AI system designing experiments, receiving data back from a robotic lab, and iterating at a speed that would be difficult for a human team to match.

That matters because biology has always resisted the hype cycle that dominates other areas of AI. In coding or mathematics, answers can often be checked quickly. In biology, the real bottleneck is usually experimentation. Wet lab work is slow, expensive, noisy, and full of physical constraints. If AI can meaningfully reduce the cost and time of iteration, the impact could spill into drug discovery, diagnostics, synthetic biology, and biomanufacturing. Cell-free protein synthesis may sound niche, but proteins sit at the center of modern therapeutics, diagnostics, enzymes, and research tools. Lowering the cost of making and testing them is not a side improvement. It changes how fast real science can move.

At the same time, another strand of the story is developing on the design side. Nature reported on March 4 that the Evo 2 genomic language model can generate short genome sequences, although researchers quoted in the piece stressed that there is still a major gap between writing plausible DNA strings and creating genomes that function reliably inside living cells. That distinction is important. It shows how quickly the field is moving while also reminding us that biological reality is still the final judge. AI can now propose increasingly sophisticated biological designs, but living systems remain far more complex than text, images, or code.

This is exactly why the most interesting development is not raw model capability on its own. It is the coupling of models to instruments, protocols, and validation layers. OpenAI’s write-up makes clear that the experimental loop included strict programmatic checks so the AI could not submit experiments that looked good in text but could not actually run on the automation platform. Scientific American also reported an instructive failure case, where the model tried to assign a negative amount of water when exploring a new condition space. That is not a trivial anecdote. It is a reminder that useful AI in medicine and biology will depend on constraints, guardrails, and interfaces to the physical world. Real progress is going to come from systems that are not only creative, but also grounded.
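To make the idea of a programmatic check concrete, here is a minimal sketch of the kind of gate that could sit between a model's proposed reaction recipes and a robotic lab. It is not OpenAI's or Ginkgo's actual code; the well limits, reagent names, and the functions `validate_recipe` and `fill_with_water` are all hypothetical. It also assumes one plausible way a negative water volume can arise: water is computed as "whatever is left" after the other reagents, so a proposal whose reagents overshoot the target volume leaves a negative remainder.

```python
from dataclasses import dataclass

# Hypothetical limits: real plate formats and liquid handlers differ.
MAX_WELL_VOLUME_UL = 20.0  # assumed maximum working volume per well, in microliters
MIN_DISPENSE_UL = 0.025    # assumed smallest volume the dispenser can deliver


@dataclass
class WellRecipe:
    well: str                     # plate position, e.g. "A1"
    components: dict[str, float]  # reagent name -> volume in microliters


def fill_with_water(well: str, reagents: dict[str, float], target_ul: float) -> WellRecipe:
    """Hypothetical helper: top up a well to target_ul with water.

    If the reagents already exceed the target, the water volume goes negative,
    which reads fine as text but cannot be pipetted.
    """
    water = target_ul - sum(reagents.values())
    return WellRecipe(well, {**reagents, "water": water})


def validate_recipe(recipe: WellRecipe) -> list[str]:
    """Return a list of physical problems; an empty list means the recipe may be submitted."""
    errors = []
    for name, volume in recipe.components.items():
        if volume < 0:
            errors.append(f"{recipe.well}: negative volume for {name} ({volume} uL)")
        elif 0 < volume < MIN_DISPENSE_UL:
            errors.append(f"{recipe.well}: {name} volume {volume} uL is below the dispenser minimum")
    # Negative volumes are already flagged above, so only positive volumes count toward capacity.
    total = sum(v for v in recipe.components.values() if v > 0)
    if total > MAX_WELL_VOLUME_UL:
        errors.append(f"{recipe.well}: total volume {total} uL exceeds well capacity")
    return errors


# A proposal whose reagents overshoot a 15 uL target, leaving -3.0 uL of water.
bad = fill_with_water("B3", {"lysate": 6.0, "energy_mix": 8.0, "dna_template": 4.0}, target_ul=15.0)
# A proposal that fits, leaving 2.0 uL of water.
good = fill_with_water("B4", {"lysate": 6.0, "energy_mix": 5.0, "dna_template": 2.0}, target_ul=15.0)

print(validate_recipe(bad))   # ['B3: negative volume for water (-3.0 uL)']
print(validate_recipe(good))  # []
```

The design choice worth noting is that the validator returns reasons rather than a bare yes or no. In a closed loop, those messages can be fed back to the model so its next proposal respects the physical limits instead of simply being dropped.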

There is also a necessary caution here for anyone tempted to treat every impressive accuracy number as biological understanding. A University of Warwick study released on March 2 warned that some AI pathology models may rely on shortcuts and confounding signals rather than truly detecting the underlying biology they claim to measure. In other words, a model can perform well on paper while still learning the wrong lesson. That warning lands at exactly the right moment. As AI tools move deeper into medicine, the question is no longer whether they can generate plausible outputs. The real question is whether they are discovering meaningful biological structure or only exploiting correlations that break when conditions change.

That tension is what makes this moment worth writing about for a general audience. We are watching AI in biology become more physical, more operational, and more useful, but also more exposed to the discipline of reality. The next phase will not be won by the model that sounds smartest in a demo. It will be won by systems that can survive the messiness of experiments, the variability of cells and tissues, and the rigor required for medical evidence. If the last era was about AI reading biology, the next one may be about AI doing biology, one validated experiment at a time.

Sources

https://openai.com/index/gpt-5-lowers-protein-synthesis-cost/